Feb. 8, 2015 – University of Utah researchers have received $1.4 million to further develop an implantable neural interface that will allow an amputee to move an advanced prosthetic hand with just his or her thoughts. The neural interface will also convey feelings of touch and movement.

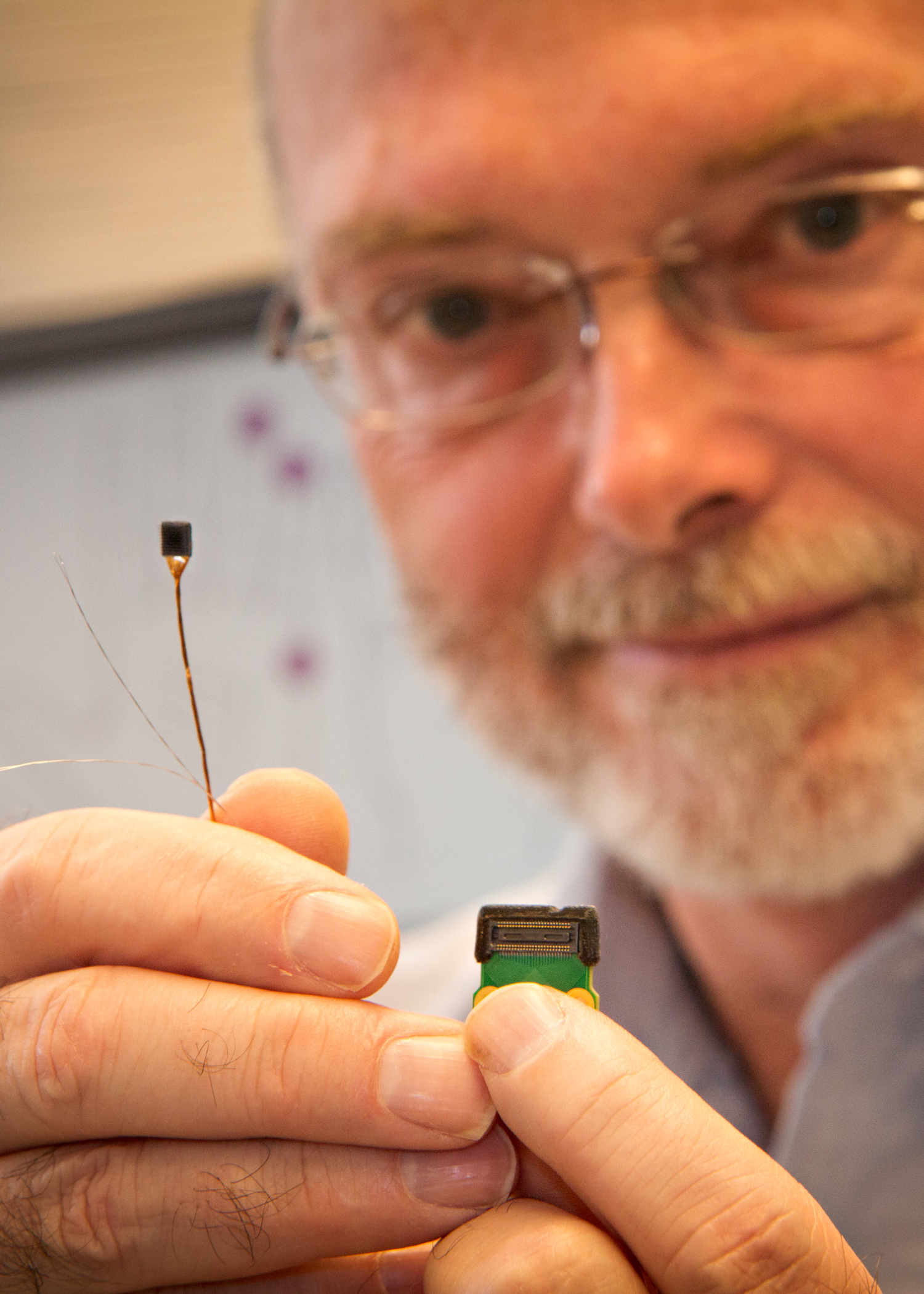

Called the Utah Slanted Electrode Array, the neural interface uses 100 electrodes that connect with nerves in an amputee’s arm to read signals from the brain telling the hand how to move. Likewise, the neural interface delivers meaningful sensations of touch and movement from a prosthetic hand back to the brain.

“Imagine wiretapping into those nerves, which are like a hotline between the brain and the body,” said U bioengineering associate professor Gregory Clark, who is leading a research team of neuroengineers, materials scientists, electrical and computer engineers, surgeons and rehabilitation specialists. “We can pick up the nerve signals, translate them, and relay them to an artificial hand. People wouldn’t have to do anything differently from what they’d already learned how to do their whole life with their real hand. They’ll just think what they normally think, and the prosthetic hand will move.”

The Utah Slanted Electrode Array was first developed by University of Utah bioengineering professor emeritus Richard Normann and ultimately will communicate with the prosthetic limb wirelessly. The U will not develop the prosthetic hand or the wireless electronics. The wireless technology will be developed by Ripple, LLC, a local company and U collaborator in Salt Lake City, and the electrodes for the array are being manufactured by Blackrock Microsystems in the U’s Research Park.

The funding from the Defense Advanced Research Projects Agency (DARPA) will cover about 18 months of research and pay for testing on two human volunteers. The Utah team is eligible to receive up to $4.4 million from DARPA over the next five years.

Current prosthetic limbs can make only limited movements, which the user controls with remaining muscles, for example through a shoulder shrug. Most conventional prosthetic limbs also provide no sense of touch or movement. Clark hopes that the Utah Slanted Electrode Array will give users of this advanced prosthetic hand more than 20 types of hand and wrist movements by using electrical signals from remaining nerves and muscles. The sensory feedback afforded by the array might help the user not only to feel, but also to feel whole again, Clark said.

“We have the opportunity to not only significantly improve an amputee’s ability to control a prosthetic limb, but to make a profound, positive psychological impact,” DARPA program manager Doug Weber said in a news release. “Amputees view existing prostheses as if they were tools, like a wrench, used only to perform a specific job, so many people abandon their prostheses unless absolutely needed. We believe [the new prosthetic limb] will create a sensory experience so rich and vibrant that the user will want to wear his or her prosthesis full time and accept it as a natural extension of the body.”

The funding is part of DARPA’s Hand Proprioception and Touch Interfaces (HAPTIX) program, which aims to create an artificial limb so realistic that it can provide a psychological benefit to the wearer. President Barack Obama cited the project in his State of the Union Address in January as an example of the kind of technological innovation that can unleash new jobs while also benefiting people.

The new tests on human subjects will build upon shorter DARPA-funded tests recently conducted on four human subjects at the U. These tests successfully demonstrated people’s ability to control and receive sensation from a virtual prosthetic hand displayed on a computer monitor, as shown in an online video. So far, the arrays have provided users with 10 to 12 different types of movements of the virtual fingers and wrist, plus more than 130 different locations and types of touch and sensory feedback. The use of interfaces in peripheral nerves to control prosthetic hands was pioneered in earlier studies by U investigators, including present U team member Douglas Hutchinson.

The funding will be shared with U startup Blackrock Microsystems and with University of Chicago assistant professor Sliman Bensmaia and his team, who are developing the interface’s sensory algorithms.

Other U faculty team leaders include research associate professor Loren Rieth and professor V. John Mathews, both from the electrical and computer engineering department; bioengineering research assistant professor David J. Warren; Hutchinson, an associate professor of orthopaedics; Christopher C. Duncan of physical medicine and rehabilitation; and bioengineering professor Normann.